I entered the #ComputationalLinguistics field in 2018 by enrolling in a Bachelor's degree program.

Since then, a lot has changed. Almost all the things we learned about, programmed in practice and did research on are now nearly irrelevant in our day-to-day.

Everything is #LLMs now. Every paper, every course, every student project.

And the newly enrolled students changed, too. They're no longer language nerds, they're #AI bros.

I miss #CompLing before ChatGPT.

Remember when we programmed "conversational robots" (like Siri or Alexa) in a way that we could actually control exactly what is being parsed, understood, and output?

When we could manually decide that if the user asks for the weather, the device is going to consult a weather data API as an actual real vetted information source?

When we programmed them to be *useful* for *tasks* and *information retrieval*, rather than being amazed that they're "talking" in a *convincing* manner?

It's crazy!

If you told one of our robots "hey, please call Lucia but before that see whether her birthday collides with anything on my calendar", it would actually work!

And we could look into the state of the machine and see what it interpreted your intent to be.

Something like "Get date:'birthday' from contact:'Lucia' and check events @ calendar:date, ask user confirmation, then call contact:'Lucia'".
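To make that inspectable "state of the machine" concrete, here is a minimal sketch of how such an intent could be held as an explicit plan of steps. It is an illustration only, not how Siri, Alexa, or any research system actually worked: the `Step` class, the action names, and the `CONTACTS` / `CALENDAR` data are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical in-memory data standing in for a contact book and a calendar.
CONTACTS = {"Lucia": {"birthday": date(2025, 3, 14)}}
CALENDAR = {date(2025, 3, 14): ["Dentist appointment"]}


@dataclass
class Step:
    action: str  # what to do, e.g. "get_date" or "call"
    args: dict   # the slots filled in from the user's utterance


def build_plan(contact: str) -> list[Step]:
    """Return the explicit, human-readable plan for the birthday-then-call request."""
    return [
        Step("get_date", {"field": "birthday", "contact": contact}),
        Step("check_events", {}),
        Step("ask_confirmation", {}),
        Step("call", {"contact": contact}),
    ]


def run_plan(plan: list[Step], confirm) -> list[str]:
    """Execute the plan step by step and return a log of what happened."""
    log = []
    day = None
    for step in plan:
        if step.action == "get_date":
            day = CONTACTS[step.args["contact"]][step.args["field"]]
            log.append(f"date={day.isoformat()}")
        elif step.action == "check_events":
            log.append(f"conflicts={CALENDAR.get(day, [])}")
        elif step.action == "ask_confirmation":
            if not confirm():  # the user can stop the plan here
                log.append("aborted")
                break
            log.append("confirmed")
        elif step.action == "call":
            log.append(f"calling {step.args['contact']}")
    return log
```

Calling `run_plan(build_plan("Lucia"), confirm=lambda: True)` walks through every step in order, and printing the plan before running it shows exactly what the system took the request to mean.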

It *had* understanding, context, knowledge. We threw that all out for a word generator!!

@lianna I thought something similar for a while, and I have always wondered why higher levels of machine learning or LLMs are not used to improve on that concept. After all, that IS the number one problem with generative AI: It does not have concepts. And really, while I do not think that the hard-coded Siri etc. were great ("Sorry, I did not understand that"), I think those concepts should have been iterated on and enhanced / improved instead of replaced.

@wildrikku @lianna then again, people already bought stupid Siris and Alexas in masses, so I guess demand is sufficient, why invest in a good product when you can sell a bad one...

@wildrikku In academia, we were already at a point where those "sorry, I didn't understand that" type situations rarely happened, and if they did, they would give you more helpful troubleshooting information and a less infuriating error response.

It's just that all of that progress suddenly was cut – sometimes literally, cutting off the funding for ongoing research – once ChatGPT came out.

@lianna I miss parsing, semantic tagging, translation memory, lattice transforms... Hell, I miss the incessant overtuning to the Penn Treebank corpus

It's all been mulched into a pile of perceptrons, representation is latent, interpretation is hidden, answers without question, questions without answers

@the_dot_matrix hey, i like perceptrons :(

@lianna true, I shouldn't blame the actually useful foundations of ML for the Akira-esque way they've been wired up

@the_dot_matrix My current ""weekend"" project is setting up an old laptop I got from some jumble sale with some contemporary (~2001?) Linux distribution, and using that authentic early 2000s system to program a language detection classifier perceptron by hand.

No libraries, no IDEs, no internet, just pure bliss.
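For readers who have not seen one, a library-free language-detection perceptron can be sketched roughly like this. This is not the project described above: the character-bigram features, the function names, and the use of modern Python are all assumptions made for illustration.

```python
def features(text):
    """Count character bigrams, padding the text with a space on each side."""
    padded = " " + text.lower() + " "
    counts = {}
    for i in range(len(padded) - 1):
        gram = padded[i:i + 2]
        counts[gram] = counts.get(gram, 0) + 1
    return counts


def score(weights, feats):
    """Dot product between one language's weights and the feature counts."""
    return sum(weights.get(gram, 0.0) * count for gram, count in feats.items())


def predict(model, text):
    """Return the language whose weight vector scores the text highest."""
    feats = features(text)
    # Iterate languages in sorted order so that ties break deterministically.
    return max(sorted(model), key=lambda lang: score(model[lang], feats))


def train(samples, epochs=10):
    """Multi-class perceptron: update weights only when a prediction is wrong."""
    model = {lang: {} for _, lang in samples}
    for _ in range(epochs):
        for text, gold in samples:
            guess = predict(model, text)
            if guess != gold:
                for gram, count in features(text).items():
                    # Pull the correct language towards these features...
                    model[gold][gram] = model[gold].get(gram, 0.0) + count
                    # ...and push the wrongly guessed language away from them.
                    model[guess][gram] = model[guess].get(gram, 0.0) - count
    return model
```

`train` takes a list of `(text, language)` pairs, and `predict(model, text)` returns one of the language labels seen in training. Every weight sits in a plain dictionary keyed by bigram, so you can open the model and read off which character pairs push a text towards which language.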

@lianna if you're willing to share more about it, I'd love to see it. This area is not my forte, but I appreciate learning about it c:

@the_dot_matrix Once I start the project for real, I want to make some videos about it tracking my journey. I'll make sure to post them here. :)

@lianna just brainstorming here, I think I would like a mix of approaches...

an LLM (strictly on-device processing, trained ethically) that consumes vetted weather (or traffic, or Wikipedia entries) data from API endpoints that I choose manually. Maybe there's some "Sources Manager" application that connects these two things, but every part is interchangeable.

@afreytes Some people seem to do just that, using LLMs as an output replacement.

https://link.springer.com/chapter/10.1007/978-3-031-60615-1_2

It might be a good idea to make robot speech more authentic, lifelike and convincing.

Funnily enough, though, some research suggests that robots being human-like isn't always what you want. People may mistrust emotional or polite robots and find them annoying because they know it's "fake", programmed.

Some users need not human-like language, but clear precision and predictability.

@afreytes

The thing is that even researchers themselves are not immune to the highly contagious AI hype.

Reputable publishers publish articles that practically trip over themselves with unscientific and imprecise wording, inferences and predictions.

Check this one, even in the intro:

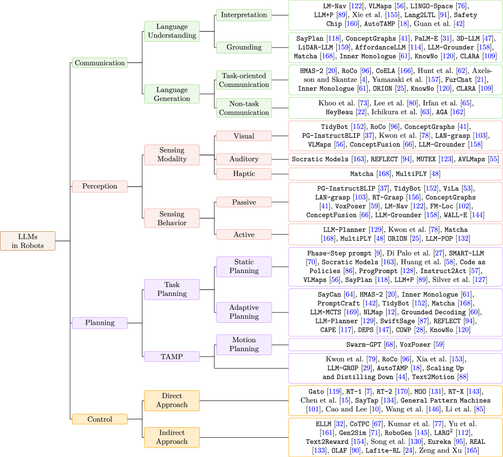

https://link.springer.com/article/10.1007/s11370-024-00550-5

"[LLMs enable] robots to communicate, understand, and reason with human-like proficiency"

... really? No, they don't. They appear to do so, but they don't.

@lianna I agree with you. AI != robot (also "AI" is not AI), and those two combined aren't comparable to human anything.

What I was thinking was that I like Siri, Cortana, as interfaces that I know are limited but could be expanded with LLM-like "language" and summarization. But instead of something useful like that, the hype just rolled (trampled?) over everything.

@lianna LLMs really flooded the zone with shit, but even in 2018, things felt in decline.

One frustrating thing was everyone using BERT as a baseline, then comparing with their model, then calling it a day.

Was BERT actually good at this task? What if I did something simple, like a majority class baseline, or a tf-idf vector model? These kinds of contextualizing baselines were abandoned, and it’s only gotten worse since.
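For anyone unfamiliar with the term, a majority class baseline just predicts the most frequent training label for every test item. A minimal sketch, with a hypothetical function name and made-up labels in the usage note:

```python
from collections import Counter


def majority_class_baseline(train_labels, test_labels):
    """Predict the most common training label everywhere; return (label, accuracy)."""
    majority, _ = Counter(train_labels).most_common(1)[0]
    correct = sum(1 for label in test_labels if label == majority)
    return majority, correct / len(test_labels)
```

With training labels `["pos", "pos", "neg"]` and test labels `["pos", "neg", "neg", "pos"]`, this returns `("pos", 0.5)`. A fancy model that scores close to that number on the same split has not learned much.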

@thedansimonson I think part of the reason is the constant push to publish.

No wonder people are taking shortcuts just to get something out.

If my job and therefore my livelihood depended on it, of course I would choose the "easy way" and just do a throwaway "we tried a new hyperparameter and here's how it compares to <insert popular model>" thing too.

@lianna maybe it’s inevitable to feel this way.

Roger Schank, an AI researcher from the 70s, used to always write cranky posts on Twitter about how statistical NLP wasn’t real “AI.”

So maybe I’ve just made my cranky old man turn.

@lianna I'm not even in Computational Linguistics, but I relate on a deep level. There is a sense of loss in all this hype.

@lianna this is just a phase. computational linguistics will come back afterwards and investigate language again. rule-based and statistical methods are not dead, they are just hibernating.